Claw on Cloud: How We Run Stateful AI Agents on a Stateless Edge

When you ask our investment research agent a follow-up question — "now run the same analysis for their main competitor" — something has to remember the last hour of conversation. The prior reasoning, the data it pulled, the half-finished scratchpad. If the agent forgets all that and starts fresh every time, the experience falls apart.

That's the problem this post is about. It's a familiar one: AI agents carry a lot of context, and the cloud prefers things that don't. Most modern infrastructure is built to be stateless — any server can handle any request — because that's what makes it fast, cheap, and resilient. Agents push hard against that grain.

We want to talk about how we resolved the tension, and the two or three design choices we're proudest of. Not a tour of our stack. Just the ideas.

A quick note before the architecture. The agent runtime at the heart of our system is OpenClaw, the open-source agent infrastructure. We didn't build that part — we build on it. OpenClaw gives us a gateway, a session model, and a tool execution environment; what this post is about is everything we wrapped around it to make it work as a hosted, multi-user product on a stateless edge. The interesting design decisions are in that wrapper, not in the runtime underneath.

The shape of the system

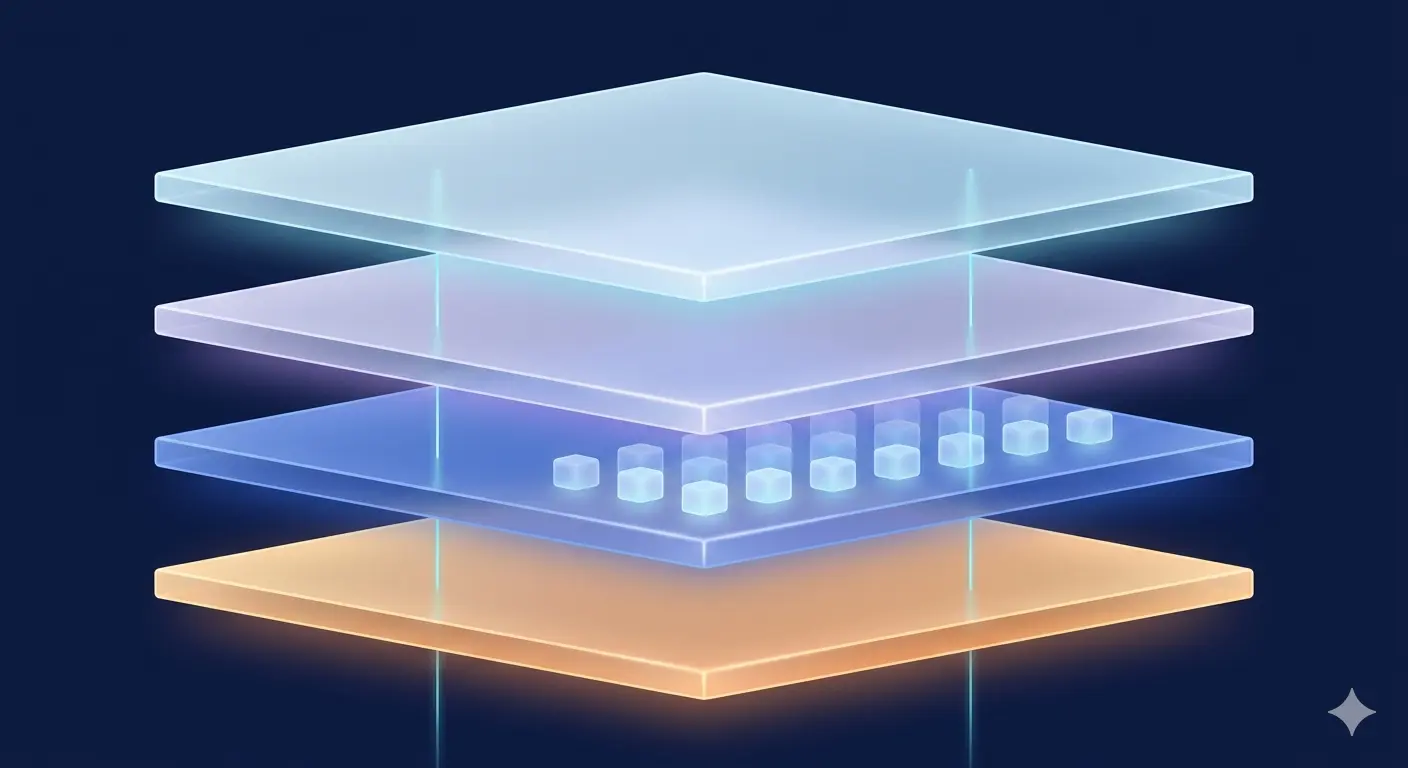

There are three layers, and the trick is keeping them honest about what they own.

At the edge, a thin request handler that knows nothing and remembers nothing. It's the only thing the public internet talks to. Every request looks identical to it.

In the middle, an orchestrator that decides where each request should go. This is where the interesting decisions happen.

At the back, containers running the actual agent. Each container can hold one user's working context at a time — their conversation, their tools, their scratch space.

And underneath all of it, durable storage that holds the truth about every user's state, regardless of which container is alive at any given moment.

The handler holds nothing. The container holds everything for now. Storage holds everything for keeps. That separation is the whole game.

Fig 1: The three-layer architecture with state ownership.

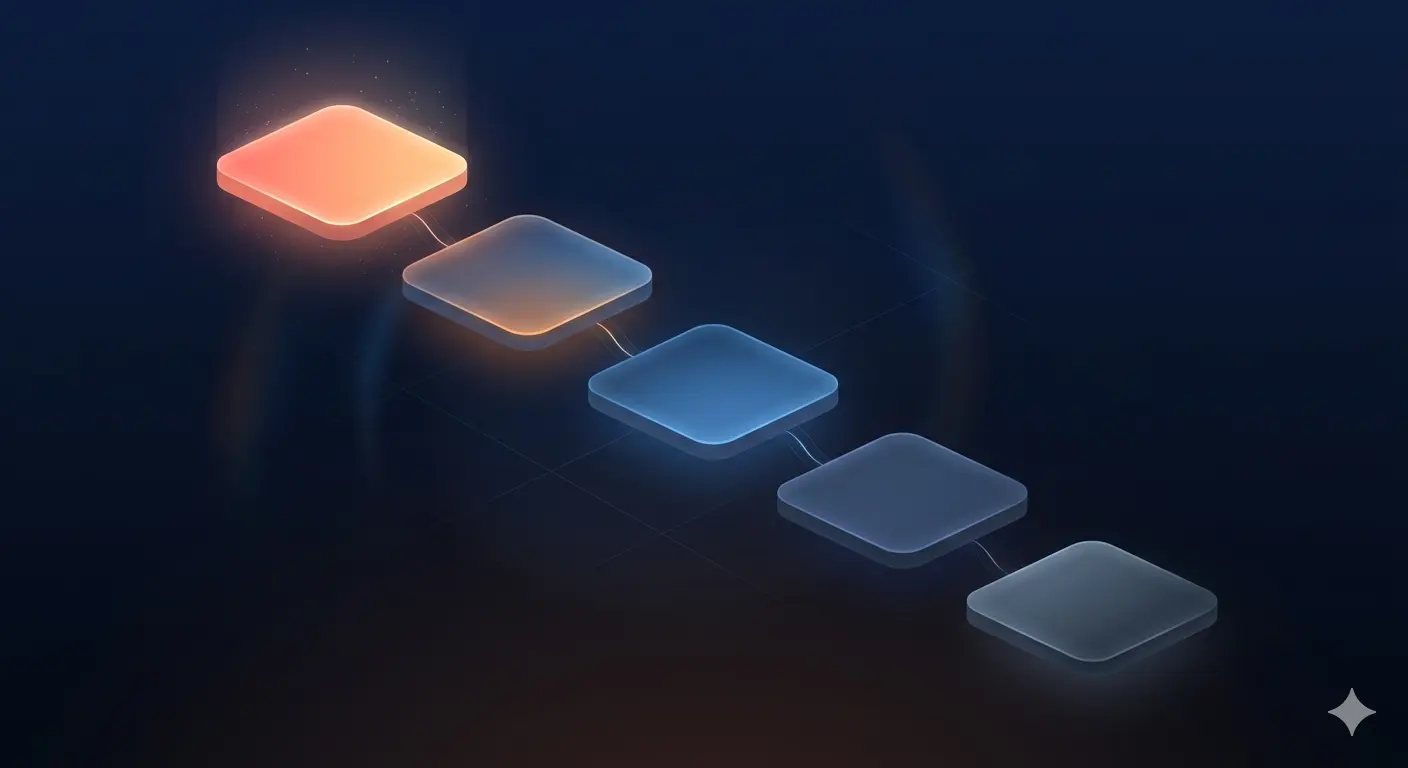

The bit we're proudest of: a preference ladder, not a leash

Here's the design problem in plain language.

You can do this the easy way: every request goes to whichever container is free. Simple, robust, and terrible for agents — because the next container probably doesn't remember the user, and reloading their context from storage takes time the user can feel.

You can do it the other easy way: pin each user to a fixed container. Fast when it works, fragile when it doesn't. Containers die. Users go quiet for hours. Deploys happen. Pinning sounds clean on a whiteboard and turns into 3 a.m. pages in production.

What we do instead is a preference ladder. When a request comes in, the orchestrator walks down a list of options in order — from "the best possible container for this user right now" to "any container at all" — and takes the first one that fits.

Fig 2: Preference ladder: from the best possible container for this user right now to any container at all.

The top of the ladder is the user's own container, if it's still working on something for them. Just queue behind it. Slightly below that: a container they were just using, held briefly in reserve in case they send a follow-up. (They almost always do.) Below that: any container that has their state warm. Below that: any idle container, willing to swap in their state from storage. At the bottom: spin up a new one.

The reserved-window tier is the unsung hero. It's tuned to match the natural rhythm of a chat — read the answer, think, type a follow-up — and it absorbs the overwhelming majority of follow-up requests with zero context reload. The user feels continuity. The container gets reused. Storage doesn't get hammered.

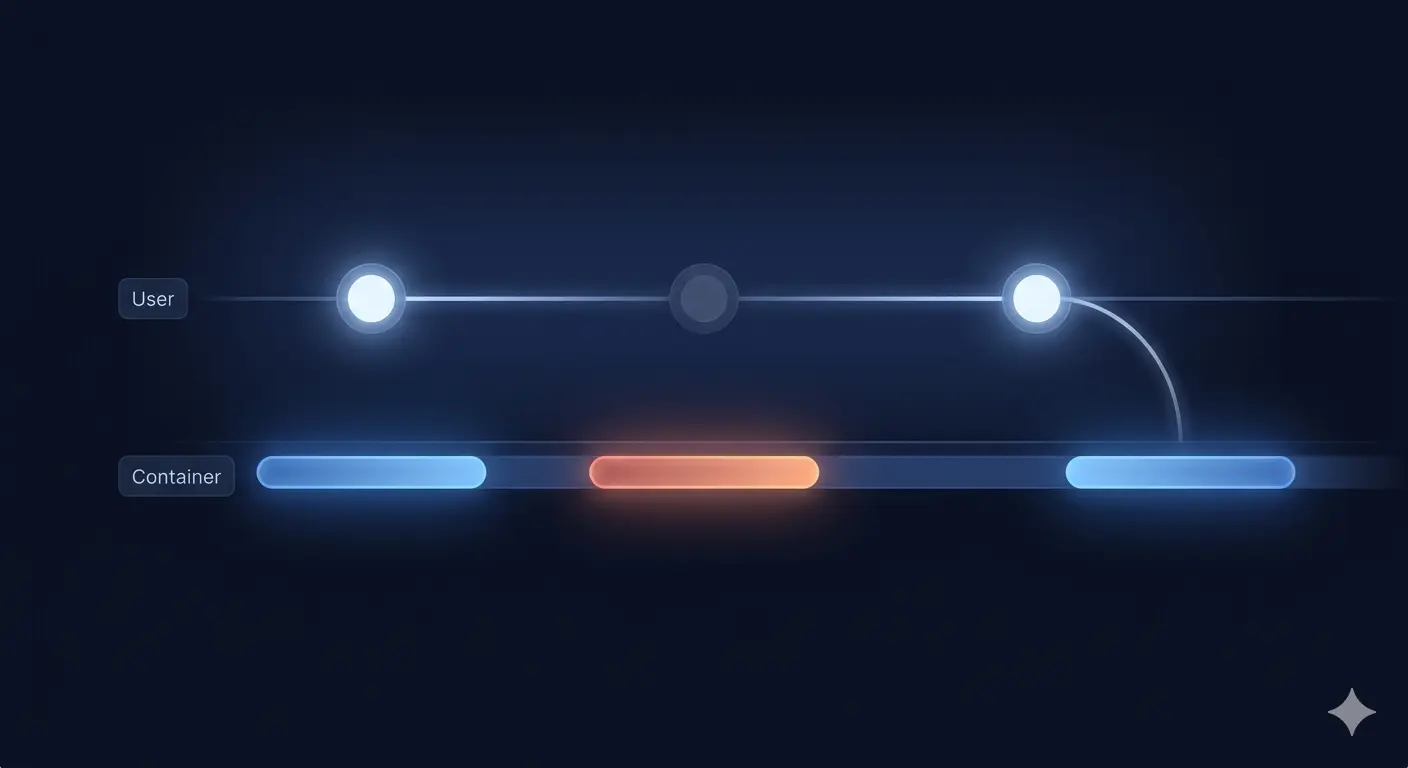

Fig 3: The reserved-window timeline.

The whole thing also degrades gracefully. Under heavy load, the orchestrator slides further down the ladder — more swaps, more cold starts — but no request fails for lack of capacity. Under light load, almost everything lands at the top. Crucially, nothing is pinned: a user's affinity to their container is a preference, not a contract. If the container dies, the system shrugs and finds another one.

That distinction — preference, not pinning — is the design choice that pays the most dividends. It gives us the speed of stickiness with the resilience of statelessness.

Three places for state, one source of truth

The ladder only works because state lives in three places at once, with very clear rules about who owns what.

Durable storage owns the truth. Every user has a complete snapshot sitting safely in cloud storage — their conversations, their tool configurations, their working notes. If every container in the world died right now, no user would lose anything.

The container owns the working copy. During an active session, the agent reads and writes to its own local files. Fast, no network in the loop.

A background sync keeps them honest. A few seconds at a time, the container pushes changes back to storage. When a request finishes, it pushes a full backup as a safety net.

This is what lets us treat containers as cattle while giving each user the experience of a long-lived, attentive assistant. A container can sleep, get replaced, or get reaped — and the next request just lands on whatever container the ladder picks, restores from storage, and carries on. There's no orchestration scar tissue to maintain, because there's nothing to keep in sync beyond the storage layer itself.

There's also a smaller but related choice: long-running agent tasks don't make the request handler wait. The handler hands off, the container does its work in the background, and a callback wakes the handler when the answer is ready. This sounds like a small detail. In practice, it's the difference between a system that survives an unusually thoughtful research run and one that times out at the worst possible moment.

Behavior in plain text, infrastructure in code

One more idea worth mentioning, because it has saved us more headaches than anything on the list above.

The way our agents think — how they plan a piece of investment research, when they reach for which data source, how they structure an analysis — is defined in plain markdown files, not code. To change the agent's behavior, an engineer or analyst edits a file and pushes it. The next time anyone uses the agent, they get the new behavior.

No rebuild. No deploy. No version migration. The agent's "personality" is hot-swappable because it's just text, and text is exactly what cloud storage is good at.

This sounds almost too simple to be worth a paragraph, but it's a quiet superpower. Product changes to how the agent thinks don't require infrastructure work. Infrastructure changes don't risk product regressions. The two move at their own speeds and rarely step on each other. Anyone who has worked in a system where every behavior tweak required a full deploy will recognize what we're describing.

The takeaways

There's plenty more under the hood — image builds, metrics, scheduled jobs, autoscaling, ops tooling. We'll write about some of it another time. None of it is where the interesting design decisions were.

The interesting decisions were these:

Don't pin users to containers — prefer them. A preference ladder gets you most of the affinity benefit with none of the operational pain. Pinning is a contract you'll regret signing.

Three places for state, one source of truth. Durable storage owns the truth. Containers hold the working copy. A quiet background sync keeps them aligned. State that lives in only one place is fragile; state that lives everywhere with no owner is chaos.

Behavior in plain text, infrastructure in code. Decoupling what the agent does from how the agent runs means the two can evolve independently. Most of the time, that's worth more than any individual optimization.

If you're building agent infrastructure — or thinking about whether to — those three ideas are what we'd hand you on a napkin. The rest is details.

Building something similar, or curious how this looks in practice? Get in touch.